The AI & Human Rights Index is a global research project of the AI Ethics Lab at Rutgers University that examines how artificial intelligence can violate or advance human rights. It will be published by All Tech Is Human.

Editors from four continents are working with contributors worldwide to develop a comprehensive legal framework that serves as a guiding structure for evaluating AI’s impact across societal sectors.

The Index draws on eight decades of human rights law to organize the relationships among rights, the instruments used to measure and enforce those rights, the AI ethics that help identify and address gaps in existing law, their application across societal sectors, and AI’s negative and positive impacts, especially on vulnerable populations. At its core, this work is grounded in the principle that human dignity is the foundation of all rights.

The Index emphasizes both harm prevention (nonmaleficence) and measurable positive outcomes (beneficence), equipping societal sectors with frameworks to evaluate and strengthen accountability so that AI benefits humanity and the environment.

Index researchers apply a Solutions Scholarship methodology that interrogates and critiques AI while committing to identify measurable solutions for every problem analyzed. This approach embeds solutions-focused research into each article and supports the development of technical, cultural, and legal literacy through multimedia, interactive, and structured knowledge systems accessible to the public.

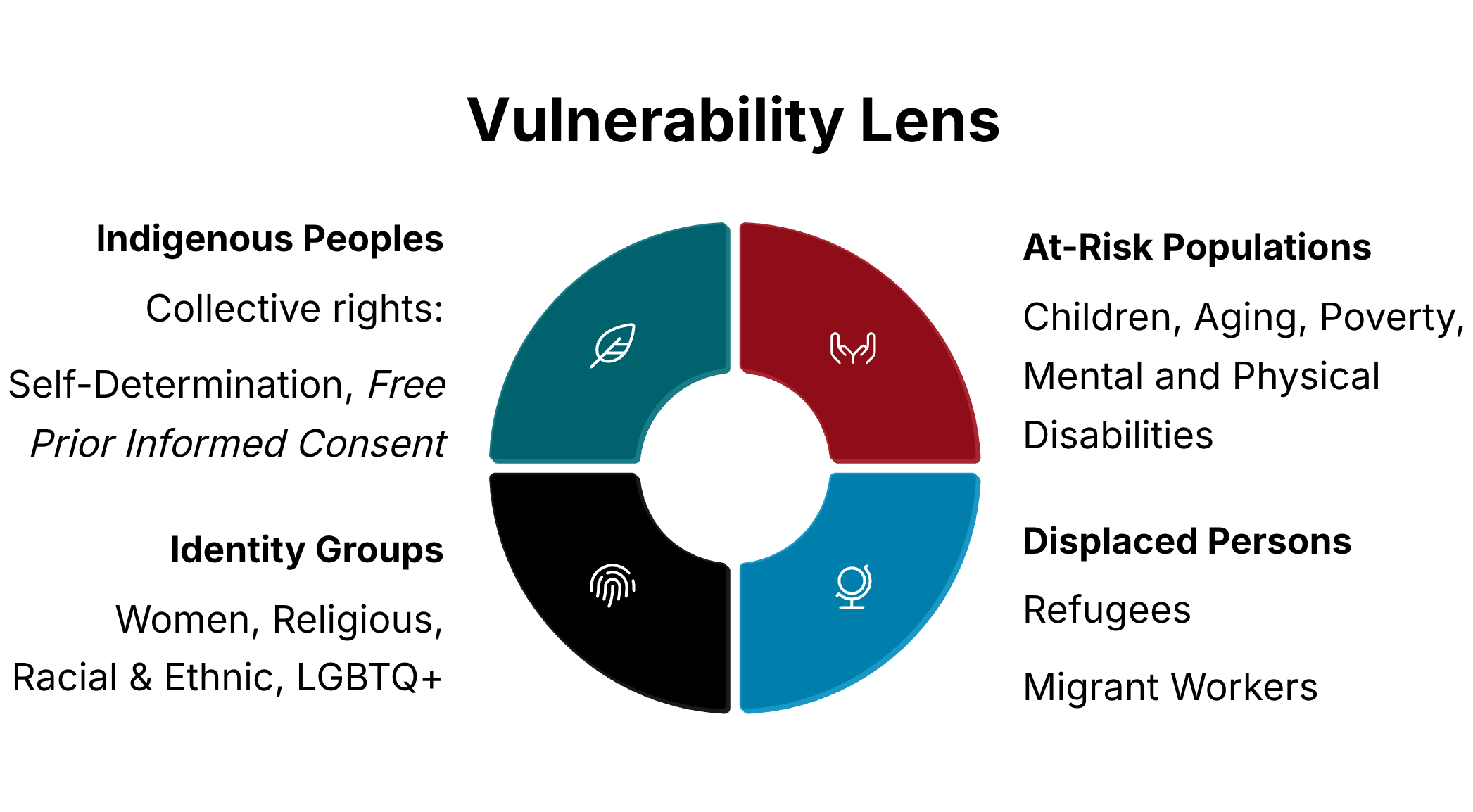

The Index applies what the United Nations calls a “vulnerability lens,” prioritizing populations at heightened risk of harm while identifying opportunities for increased protection and empowerment.

In advancing this mission, the Index is organized around the following research questions:

Which specific human rights are most at risk, and which ones could AI benefit? What cultural contexts and regional approaches shape how these rights are interpreted?

Which populations, already susceptible to human rights abuses, are most affected by AI systems? How can AI violate or protect their fundamental rights?

What international laws and frameworks can be used to assess AI’s impact on human rights at every stage of its lifecycle, from development and deployment to monitoring?

Which sectors of society are responsible for ensuring that AI systems protect and promote human rights?

What legal, technical, and ethical terms are essential for engaging in this interdisciplinary, global conversation?

Building on this legal foundation, the Index is designed to move from analysis to implementation through a technical and governance system. The Index organizes the relationships among rights, instruments, principles, sectors, and vulnerable populations. This framework is then translated into a machine-readable Protocol, with the ultimate objective of building the AI & Human Rights Governance Flywheel known as CHARTER.

The Protocol is a semantic infrastructure that translates the Index into a structured technical system for evaluating how AI systems may both violate and advance human rights.

SKOS, the Simple Knowledge Organization System, enables the organization of concept schemes, the labeling of related ideas, the identification of semantic relationships, and the preparation of documentary notes.
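To make the role of SKOS more concrete, the following is a minimal sketch of how a few rights concepts could be grouped into a concept scheme, labeled, linked, and annotated, then written out as Turtle. It is illustrative only: the `https://example.org/aihr/` namespace, the concept identifiers, and the helper names `Concept`, `literal`, and `to_turtle` are assumptions made for this example and are not taken from the Index or its Protocol.

```python
from dataclasses import dataclass, field

# Hypothetical namespace for illustration; not the Index's actual URIs.
BASE = "https://example.org/aihr/"


@dataclass
class Concept:
    """One skos:Concept: a label, links to other concepts, and an optional note."""
    local_id: str
    pref_label: str
    broader: list[str] = field(default_factory=list)   # skos:broader targets
    related: list[str] = field(default_factory=list)   # skos:related targets
    scope_note: str = ""                                # skos:scopeNote text


def literal(text: str) -> str:
    """Return an English-tagged Turtle string literal with quotes escaped."""
    escaped = text.replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"@en'


def to_turtle(scheme_id: str, concepts: list[Concept]) -> str:
    """Serialize a concept scheme and its concepts as Turtle text."""
    lines = [
        "@prefix skos: <http://www.w3.org/2004/02/skos/core#> .",
        f"@prefix ex: <{BASE}> .",
        "",
        f"ex:{scheme_id} a skos:ConceptScheme .",
    ]
    for c in concepts:
        props = [
            "a skos:Concept",
            f"skos:inScheme ex:{scheme_id}",
            f"skos:prefLabel {literal(c.pref_label)}",
        ]
        props += [f"skos:broader ex:{b}" for b in c.broader]
        props += [f"skos:related ex:{r}" for r in c.related]
        if c.scope_note:
            props.append(f"skos:scopeNote {literal(c.scope_note)}")
        lines.append("")
        lines.append(f"ex:{c.local_id} " + " ;\n    ".join(props) + " .")
    return "\n".join(lines)


# Illustrative concepts only; the Index's real vocabulary may differ.
concepts = [
    Concept("civilPoliticalRights", "Civil and political rights"),
    Concept(
        "rightToPrivacy",
        "Right to privacy",
        broader=["civilPoliticalRights"],
        related=["dataProtection"],
        scope_note="Example note describing how the term is used.",
    ),
    Concept("dataProtection", "Data protection", related=["rightToPrivacy"]),
]

turtle = to_turtle("rightsScheme", concepts)
print(turtle)
```

The sketch covers the four SKOS functions named above: the scheme (`skos:ConceptScheme`), labels (`skos:prefLabel`), semantic relationships (`skos:broader`, `skos:related`), and documentary notes (`skos:scopeNote`).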

OWL, the Web Ontology Language, supports the articulation of formal ontologies, the definition of logical constraints, the creation of inference-based class definitions, and the specification of property restrictions. It is used with care, recognizing that human rights are continually contested and reinterpreted across legal and cultural contexts.
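As a rough illustration of what a property restriction looks like in OWL, the sketch below builds a small Turtle fragment stating that every member of one class is linked by some property to at least one member of another class (an `owl:someValuesFrom` restriction). The class and property names (`RightsImpact`, `affectsRight`, `HumanRight`), the `https://example.org/aihr/` namespace, and the helper `some_values_restriction` are hypothetical, chosen only for this example; they are not drawn from the Index's Protocol.

```python
# Standard OWL and RDFS namespaces, plus a hypothetical example namespace.
PREFIXES = """@prefix owl: <http://www.w3.org/2002/07/owl#> .
@prefix rdfs: <http://www.w3.org/2000/01/rdf-schema#> .
@prefix ex: <https://example.org/aihr/> ."""


def some_values_restriction(cls: str, prop: str, filler: str) -> str:
    """Return Turtle saying: every `cls` has at least one `prop` value in `filler`."""
    return (
        f"ex:{cls} a owl:Class ;\n"
        "    rdfs:subClassOf [\n"
        "        a owl:Restriction ;\n"
        f"        owl:onProperty ex:{prop} ;\n"
        f"        owl:someValuesFrom ex:{filler}\n"
        "    ] ."
    )


# Hypothetical names: an impact record must point at some human right.
axiom = some_values_restriction("RightsImpact", "affectsRight", "HumanRight")

ontology = "\n\n".join([
    PREFIXES,
    "ex:HumanRight a owl:Class .",
    "ex:affectsRight a owl:ObjectProperty .",
    axiom,
])
print(ontology)
```

Under OWL's open-world semantics, a restriction like this supports inference rather than validation: a reasoner concludes that a related right exists even when none is recorded, instead of flagging the record as incomplete. That is one reason such constraints call for the care described above.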

CHARTER, Classifying Human Rights Advancements for Responsible Technology and Ethical Response, serves as a continuous feedback system, or governance flywheel. The process unfolds in a structured cycle:

Through this iterative process, CHARTER enables ongoing evaluation, refinement, and governance of AI systems in relation to human rights.

The publication, AI & Human Rights Index, is the result of an international collaboration among individual researchers affiliated with:

Recommended Citation: Nathan C. Walker, Dirk Brand, Caitlin Corrigan, Georgina Curto Rex, Alexander Kriebitz, John Maldonado, Kanshukan Rajaratnam, Tanya de Villiers-Botha, and Hisham Zawil, eds. AI & Human Rights Index. New York: All Tech Is Human; Camden, NJ: AI Ethics Lab at Rutgers University, 2026. aiethicslab.rutgers.edu/index.